From Scratch: MNIST Digit Recognizer

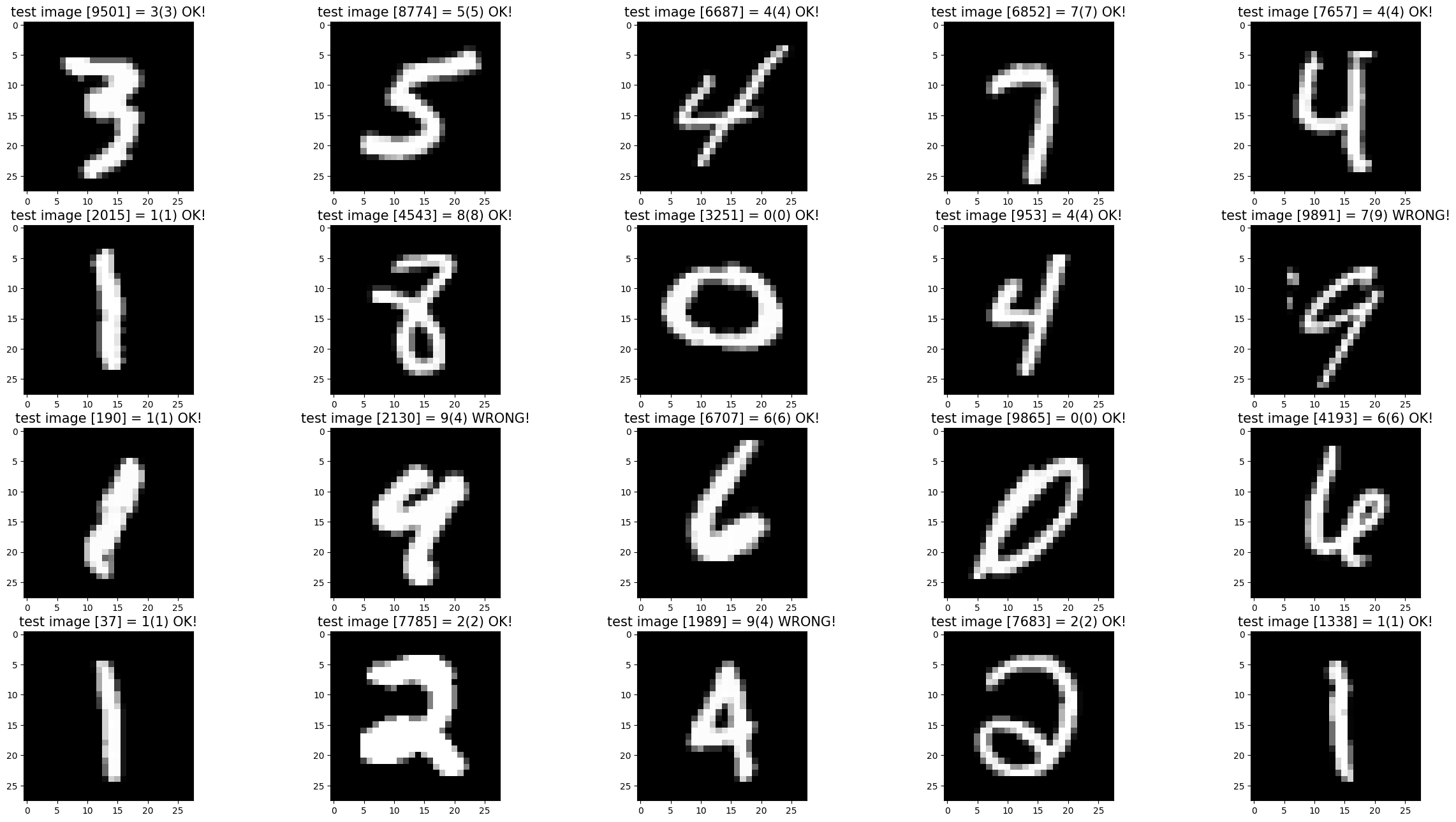

I implemented a simple 3-layer neural network (~80% test accuracy) for recognizing digits from the MNIST dataset, without using any ML libraries! This was mainly inspired by the great explainer video by Samson Zhang.

Neural Networks, Put Simply*

*This was my first time dealing with neural networks, so here I’ll try to describe what they are and how they work to the best of my ability.

A neural network can be treated like a computational analog to the human brain. Of course, the brain is far more complicated than that, but at its core a neural network shares 2 main features with it:

- It’s made out of “neurons” that connect to one another, and

- It is capable of learning how to perform a certain task.

If you are familiar with graphs in computer science, you can think of a neural network as a bunch of interconnected nodes called “neurons”. These nodes are arranged in successive layers, and each “connection” represents some kind of mathematical function that operates on the preceding layer. These functions consist of applying weights and biases, followed by an activation function. The weights and biases associated with the connections in the neural network are what we change during training.
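To make "weights and biases, followed by an activation function" concrete, here is a minimal sketch of a single layer in plain Python. The function names (`relu`, `dense_layer`) and the toy numbers are made up for illustration and are not taken from the notebook.

```python
def relu(z):
    """ReLU activation: keep positive values, clamp negatives to 0."""
    return max(0.0, z)


def dense_layer(inputs, weights, biases, activation):
    """Compute one layer's outputs from the previous layer's outputs.

    weights[j][i] is the weight on the connection from input neuron i
    to output neuron j; biases[j] is the bias of output neuron j.
    """
    outputs = []
    for w_row, b in zip(weights, biases):
        # Weighted sum of the inputs plus the bias...
        z = sum(w * x for w, x in zip(w_row, inputs)) + b
        # ...passed through the activation function.
        outputs.append(activation(z))
    return outputs


# Hypothetical toy values: 3 input neurons feeding 2 output neurons.
inputs = [1.0, 2.0, 3.0]
weights = [[0.5, -1.0, 1.0],
           [-1.0, 0.0, 0.0]]
biases = [0.5, 0.0]

layer_out = dense_layer(inputs, weights, biases, relu)
print(layer_out)  # [2.0, 0.0]
```

The second neuron's weighted sum is negative, so ReLU clamps it to zero. Training would adjust `weights` and `biases`, never the inputs.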

We can train these neural networks through various methods, but a common one is supervised learning. This is especially good for a classification task, like digit recognition. Put simply, we feed the network a lot of data with corresponding labels. We then iteratively do the following:

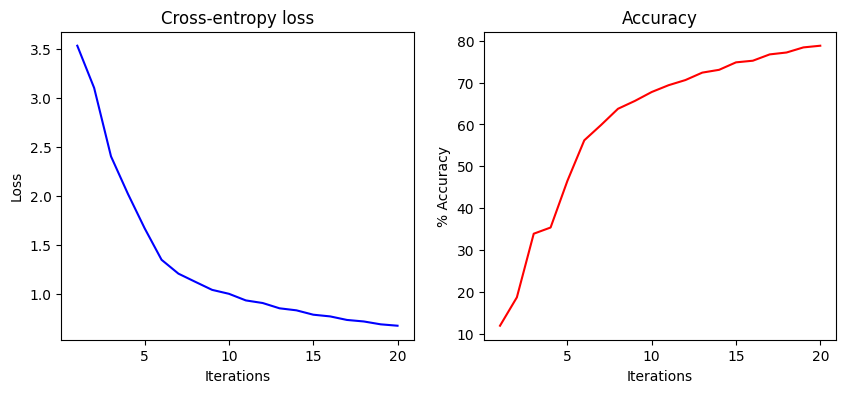

- Make a prediction using the existing weights and biases. This is called forward propagation.

- Calculate the model’s loss, i.e., some formula for describing how far off the predictions are from the actual value.

- Nudge the weights and biases in a direction to decrease the loss. This can be done through back propagation and the concept of gradient descent.

After some iterations (20~100) and at an appropriate learning rate, the neural network can “learn” how to recognize digits (modelled through the learned weights and biases).
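The predict / measure loss / nudge loop is easier to see on a problem far smaller than digit recognition. The sketch below fits a single linear neuron to toy data with gradient descent. The data, the mean-squared-error loss, the learning rate and the step count are all assumptions chosen for this illustration, not values from the notebook.

```python
# Toy labelled data following y = 2x + 1 (hypothetical, for illustration only).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = 0.0, 0.0         # the weight and bias to be learned
learning_rate = 0.05    # assumed value, not the one used in the notebook
losses = []

for step in range(500):
    # 1. Forward propagation: predict with the current parameters.
    preds = [w * x + b for x in xs]

    # 2. Loss: mean squared error between predictions and labels.
    errors = [p - y for p, y in zip(preds, ys)]
    loss = sum(e * e for e in errors) / len(xs)
    losses.append(loss)

    # 3. Gradients of the loss with respect to w and b. For a single
    #    linear neuron, back propagation reduces to these two formulas.
    grad_w = sum(2 * e * x for e, x in zip(errors, xs)) / len(xs)
    grad_b = sum(2 * e for e in errors) / len(xs)

    # 4. Gradient descent: nudge each parameter against its gradient.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(round(w, 3), round(b, 3), losses[0], losses[-1])
```

A real network repeats the same four steps, except that the gradients are propagated backwards through every layer instead of being written out by hand.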

Brief technical details

The training data consists of 28-by-28 pixel images from the MNIST Dataset on Kaggle. Images were read using a sample from Read MNIST Dataset.

The neural network I built follows a 3-layer architecture that is full-batch trained:

- Input Layer - a matrix with 784 rows, one per pixel. Each column holds the flattened pixel data of one image

- Hidden Layer - a matrix (128 x 1). Activation function: ReLU

- Output Layer - a matrix (10 x 1). Each node represents the probability that the image corresponds to a specific digit category. Activation function: softmax.
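As a rough sketch of how one image flows through these three layers, the snippet below runs a forward pass with untrained random weights. It uses plain Python lists to stay dependency-free. The helper names, the weight initialisation range and the scaling of pixels to [0, 1] are assumptions for illustration, and the "image" is random noise rather than a real MNIST digit.

```python
import math
import random


def relu(values):
    return [max(0.0, z) for z in values]


def softmax(values):
    # Subtract the max before exponentiating for numerical stability.
    m = max(values)
    exps = [math.exp(z - m) for z in values]
    total = sum(exps)
    return [e / total for e in exps]


def affine(weights, biases, x):
    # weights is a list of rows; returns W.x + b as a list.
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def init_layer(n_out, n_in, rng):
    # Small random weights and zero biases (an assumed initialisation).
    weights = [[rng.uniform(-0.05, 0.05) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def forward(image, params):
    (w1, b1), (w2, b2) = params
    # Flatten 28x28 pixels into 784 values, scaled to [0, 1] (assumed).
    x = [pixel / 255.0 for row in image for pixel in row]
    hidden = relu(affine(w1, b1, x))          # 128 hidden activations
    probs = softmax(affine(w2, b2, hidden))   # 10 class probabilities
    return x, hidden, probs


rng = random.Random(0)
params = [init_layer(128, 784, rng), init_layer(10, 128, rng)]
# Stand-in image: random grey levels, not a real digit.
image = [[rng.randint(0, 255) for _ in range(28)] for _ in range(28)]

x, hidden, probs = forward(image, params)
predicted_digit = probs.index(max(probs))
print(len(x), len(hidden), len(probs), predicted_digit)
```

With untrained weights the predicted digit is meaningless. The point is only the shapes (784, then 128, then 10) and that the softmax outputs sum to 1.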

For the equations used in the implementation, see the documentation on the Jupyter notebook.

For training, I did iterations at a learning rate . The model showed a test accuracy of 80.57%.
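Test accuracy is simply the fraction of test images whose most probable output digit matches the label. A minimal sketch, assuming the predictions are available as one list of probabilities per image (the `accuracy` helper and the 3-class toy data are hypothetical and only keep the example short):

```python
def accuracy(prob_rows, labels):
    """Fraction of examples whose most probable class matches the label."""
    correct = 0
    for probs, label in zip(prob_rows, labels):
        predicted = probs.index(max(probs))  # argmax over the classes
        if predicted == label:
            correct += 1
    return correct / len(labels)


# Toy predictions over 3 classes for 4 examples (made-up numbers).
toy_probs = [
    [0.1, 0.7, 0.2],
    [0.8, 0.1, 0.1],
    [0.3, 0.3, 0.4],
    [0.6, 0.3, 0.1],
]
toy_labels = [1, 0, 2, 1]

toy_accuracy = accuracy(toy_probs, toy_labels)
print(toy_accuracy)  # 0.75
```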